AMD ROCm™ Open Software Platform

Enterprise AI stack built on AMD ROCm

Libraries · Compilers · Runtimes · Development tools

Linux · Containers · Cluster integration

Need help with AMD ROCm-based development?

Data Monsters is your best choice. We are an AMD partner that helps funded startups and enterprise R&D teams design and implement ROCm-based AI software and hardware solutions.

With 17+ years in AI, hundreds of completed projects, and deep AMD expertise, we are ready to accelerate your AI product.

Work with us

17+ years

80+ experts

150+ projects

What is AMD ROCm™?

AMD ROCm™ (originally short for Radeon Open Compute) is an open-source, primarily Linux-based GPU computing platform engineered for high-performance AI training, deep learning inference, and scientific computing. It provides a production-ready software stack, from low-level GPU drivers and runtime libraries up to popular ML frameworks, with most components released under permissive open-source licenses such as MIT.

AMD reports that ROCm 7, the latest major release, delivers over 3.5× the inference performance and 3× the training performance of ROCm 6. It adds full support for AMD Instinct™ MI350 Series GPUs, distributed inference (decoupled prefill/decode for reasoning models), new FP4/FP6 precision types, and native Kubernetes/MLOps integration for enterprise deployments.

The platform's cornerstone is HIP (Heterogeneous-computing Interface for Portability) — a C++ runtime API and kernel language that lets developers write once and run on both AMD and NVIDIA hardware, dramatically reducing vendor lock-in.

AMD Instinct™ & ROCm™ in Action

AMD VP of AI Software explains ROCm momentum, Llama 4 / DeepSeek support, and the developer-first approach at Advancing AI 2025

Key Components of AMD ROCm™

ROCm is a modular, full-stack platform. Each layer delivers specific capabilities — from hardware drivers to production ML deployment.

HIP – GPU Portability Layer

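HIP (Heterogeneous-computing Interface for Portability) is AMD's C++ runtime API that lets developers write GPU code once and deploy on both AMD and NVIDIA hardware. The HIPIFY tools (hipify-clang and hipify-perl) automate much of the translation of existing CUDA source to HIP, which can shorten a migration considerably, although performance-critical kernels usually still need manual review and tuning.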
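To give a feel for what the hipify tools automate, here is a toy Python sketch of the source-to-source renaming involved. It is not the real tool. The mapping table covers only a handful of well-known CUDA runtime names, and the function name `toy_hipify` is hypothetical. hipify-perl works by pattern substitution in a similar spirit, while hipify-clang parses the source with a real compiler front end.

```python
import re

# A tiny, illustrative subset of CUDA-to-HIP renames.
# The real hipify tools cover far more of the API surface.
CUDA_TO_HIP = {
    "cuda_runtime.h": "hip/hip_runtime.h",
    "cudaMalloc": "hipMalloc",
    "cudaMemcpy": "hipMemcpy",
    "cudaMemcpyHostToDevice": "hipMemcpyHostToDevice",
    "cudaMemcpyDeviceToHost": "hipMemcpyDeviceToHost",
    "cudaDeviceSynchronize": "hipDeviceSynchronize",
    "cudaFree": "hipFree",
}

# Match whole identifiers only, trying longer names first.
_PATTERN = re.compile(
    r"\b(?:"
    + "|".join(re.escape(n) for n in sorted(CUDA_TO_HIP, key=len, reverse=True))
    + r")\b"
)


def toy_hipify(source: str) -> str:
    """Rename the known CUDA identifiers in `source` to their HIP equivalents."""
    return _PATTERN.sub(lambda m: CUDA_TO_HIP[m.group(0)], source)


if __name__ == "__main__":
    cuda_snippet = (
        "#include <cuda_runtime.h>\n"
        "cudaMalloc(&d_x, n);\n"
        "cudaMemcpy(d_x, x, n, cudaMemcpyHostToDevice);\n"
        "cudaFree(d_x);\n"
    )
    print(toy_hipify(cuda_snippet))
```

Anything the table does not list passes through unchanged. That is roughly where manual porting effort starts in a real migration.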

MIOpen – Deep Learning Primitives

MIOpen is AMD's GPU-optimized library for deep learning operations such as convolutions, activation functions, and batch normalization. It delivers optimized kernels for training and inference on AMD Instinct GPUs.

rocBLAS & rocSPARSE

High-performance Basic Linear Algebra Subprograms (BLAS) and sparse matrix operations optimized for AMD architectures. These form the computational backbone for LLM inference, transformer models, and scientific simulations.

ROCm SMI & rocProfiler

ROCm SMI (System Management Interface) monitors GPU health, utilization, temperature, and power consumption. rocProfiler and the ROCm debugger (ROCgdb) provide deep performance profiling and debugging for GPU kernels.

Distributed Inference

New in ROCm 7 – decoupled prefill and decode phases for AI reasoning models. This disaggregated inference approach (similar to NVIDIA Dynamo) lets each phase be scheduled and scaled separately, which is intended to reduce token generation cost for high-volume LLM serving workloads.
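The idea of splitting prefill from decode can be illustrated with a small, purely conceptual Python sketch. It does not use any ROCm or serving-framework API. The `Request` class, the two worker functions, and the placeholder tokens are all hypothetical stand-ins. Prefill processes the whole prompt once and builds a cache. Decode then emits one token per step while reusing that cache, so the two phases can run on separate worker pools.

```python
from dataclasses import dataclass, field
from queue import Queue
from typing import List


@dataclass
class Request:
    prompt: List[str]
    max_new_tokens: int
    # Stand-in for the attention key/value state a real model would keep.
    kv_cache: List[str] = field(default_factory=list)
    output: List[str] = field(default_factory=list)


def prefill_worker(inbox: Queue, handoff: Queue) -> None:
    """Process each prompt in one pass, then hand the request to decode."""
    while not inbox.empty():
        req = inbox.get()
        req.kv_cache = list(req.prompt)  # placeholder for a real forward pass
        handoff.put(req)


def decode_worker(handoff: Queue, done: List[Request]) -> None:
    """Generate tokens one at a time, extending the cache at every step."""
    while not handoff.empty():
        req = handoff.get()
        for _ in range(req.max_new_tokens):
            token = f"tok{len(req.kv_cache)}"  # placeholder for a model step
            req.kv_cache.append(token)
            req.output.append(token)
        done.append(req)


if __name__ == "__main__":
    inbox, handoff, finished = Queue(), Queue(), []
    inbox.put(Request(prompt=["what", "is", "ROCm"], max_new_tokens=2))
    prefill_worker(inbox, handoff)    # could run on one pool of GPUs
    decode_worker(handoff, finished)  # could run on a different pool
    print(finished[0].output)
```

In a real deployment the handoff queue would carry the cache between machines or GPUs, which is where much of the engineering effort lies.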

ROCm Enterprise AI

An MLOps and cluster management platform launched with ROCm 7, featuring model fine-tuning, Kubernetes integration, role-based access control (RBAC), and observability hooks. Unlike proprietary enterprise AI stacks, it is available at no cost.

Why ROCm is the Best Open AI Development Framework

From hardware to application, ROCm provides a vertically integrated, fully open stack – no black boxes, no vendor lock-in

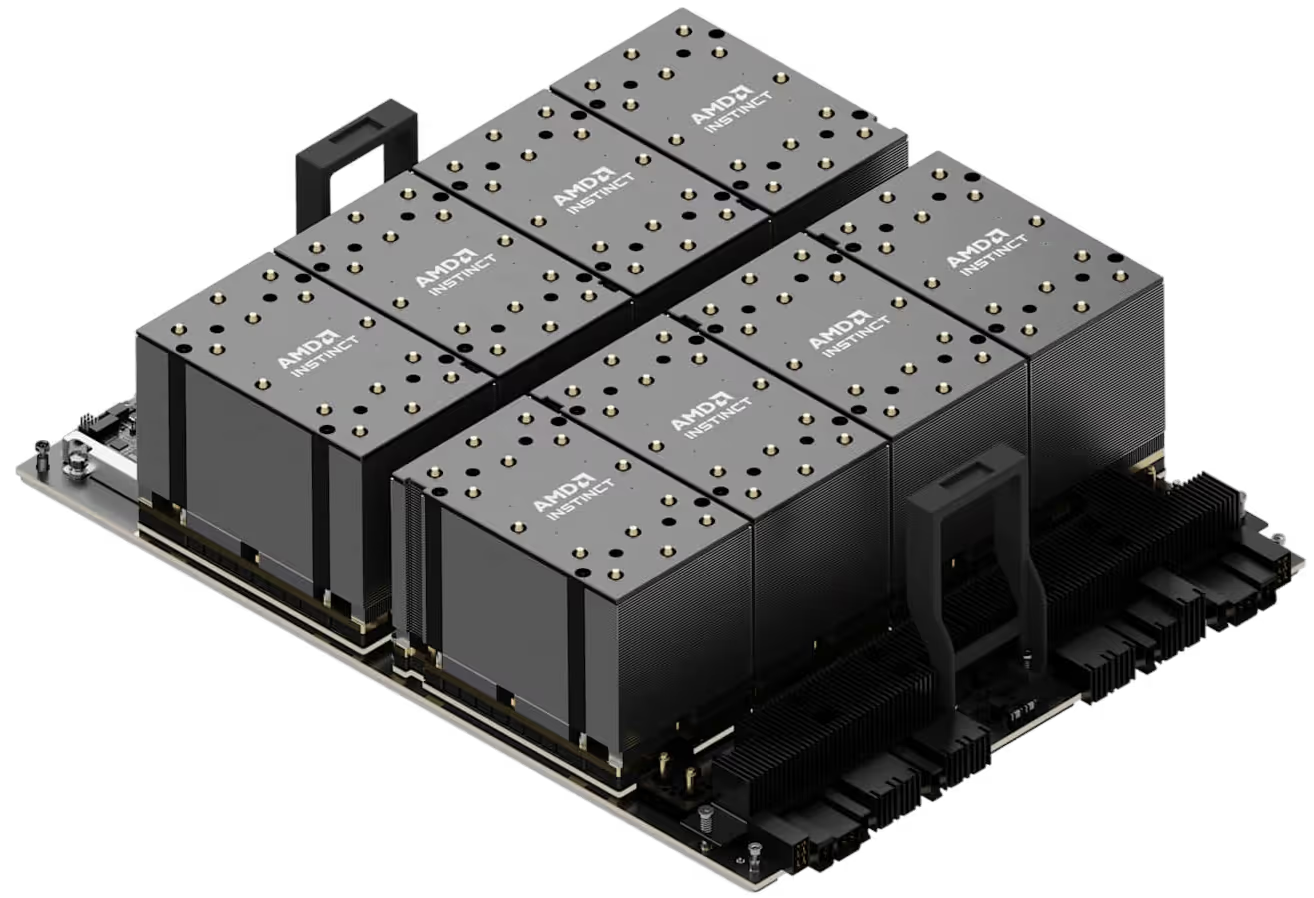

AMD Instinct™ Hardware

MI350 Series · MI325X · MI300X · MI300A (APU)

Kernel Driver (AMDGPU)

Linux kernel module · XGMI / Infinity Fabric support

ROCm Runtime

HSA Runtime · Memory management · Kernel dispatch

HIP Runtime & Compiler

HIP 7.0 · ROCm-LLVM · AOMP · OpenCL · OpenMP

AI & Math Libraries

MIOpen · rocBLAS · rocSPARSE · MIGraphX · hipFFT

ROCm Enterprise AI

MLOps · Kubernetes · Fine-tuning · Model Serving

Applications & Frameworks

PyTorch · TensorFlow · JAX · ONNX · vLLM · Triton

Popular Applications of AMD ROCm™

Data Monsters builds production-ready ROCm solutions across industries and AI disciplines

LLM Training & Fine-Tuning

Train and fine-tune large language models — Llama 4, DeepSeek, Mistral — on AMD Instinct clusters using ROCm-native PyTorch and distributed FSDP/DDP.

High-Speed Inference

Run vLLM, Triton Inference Server, and ONNX Runtime on AMD GPUs via ROCm. Distributed inference (ROCm 7) cuts token generation costs for production LLM APIs.

Computer Vision & Synthetic Data

Train object detection, segmentation, and generative vision models at scale. ROCm's MIOpen optimizes convolutions for vision transformers and diffusion models.

HPC & Scientific Computing

Run molecular dynamics, climate simulation, and finite element analysis on Frontier-class AMD clusters. ROCm supports Fortran, OpenMP, and MPI out of the box.

RAG Pipelines

Build Retrieval-Augmented Generation pipelines natively on AMD GPUs. ROCm's RAG support (added Sept 2025) enables end-to-end LLM pipelines with real-time knowledge retrieval.

Edge AI & Industrial Deployments

With ROCm 7 expanding to Ryzen laptops and workstations, AI applications can now be developed on desktops and deployed consistently from cloud to edge.

Day-Zero Framework & Model Support

ROCm 7 delivers day-zero compatibility with all major AI frameworks – no waiting for updates or patches

ROCm is Now a First-Class Platform in vLLM

ROCm software is now a tier-1 supported platform in vLLM, the leading open-source LLM inference engine. Docker images with full ROCm + vLLM support have been available since January 2026, no source-build required. This makes it seamless to serve Llama, DeepSeek, Mistral, and other models on AMD Instinct GPUs.

Data Monsters helps teams integrate vLLM, configure quantization (FP4/FP8/INT4), set up AMD Developer Cloud instances, and tune inference throughput for production workloads.

Get expert ROCm help

Try AMD ROCm Today – No Hardware Required

The AMD Developer Cloud (ADC) gives developers instant access to AMD Instinct™ MI300X GPUs via a browser – pre-configured with ROCm, Docker, and JupyterLab environments. 25 complimentary hours available for qualified developers.

Instant Cloud Access

Log in via GitHub and choose from flexible options: custom VMs, pre-built Docker containers, or JupyterLab. Start running ROCm workloads in minutes without any hardware setup.

MI300X – Pay-As-You-Go

Access AMD Instinct MI300X GPUs, each with 192 GB of HBM3 memory, on demand. Ideal for LLM inference testing, fine-tuning experiments, and benchmarking before committing to on-premises hardware.
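As a rough guide to what fits in 192 GB, the sketch below estimates the memory taken by model weights alone at a few precisions. It is a back-of-the-envelope calculation, not a sizing tool. It ignores the KV cache, activations, and runtime overhead, and the 70-billion-parameter model is a hypothetical example. The function names are illustrative.

```python
HBM_CAPACITY_GB = 192  # MI300X memory figure quoted above

# Bytes needed to store one parameter at each precision.
BYTES_PER_PARAM = {"FP16": 2.0, "FP8": 1.0, "FP4": 0.5}


def weight_footprint_gb(num_params: float, precision: str) -> float:
    """Approximate size of the weights in GB (1 GB = 1e9 bytes)."""
    return num_params * BYTES_PER_PARAM[precision] / 1e9


def fits_on_one_gpu(num_params: float, precision: str,
                    capacity_gb: float = HBM_CAPACITY_GB) -> bool:
    """True if the weights alone fit; real workloads need extra headroom."""
    return weight_footprint_gb(num_params, precision) <= capacity_gb


if __name__ == "__main__":
    params = 70e9  # hypothetical 70B-parameter model
    for precision in BYTES_PER_PARAM:
        size = weight_footprint_gb(params, precision)
        print(f"{precision}: {size:.0f} GB, fits: {fits_on_one_gpu(params, precision)}")
```

Under these assumptions a 70B model needs about 140 GB at FP16, 70 GB at FP8, and 35 GB at FP4, so lower precision leaves more room for cache and batch size.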

25 Complimentary Hours

AMD provides approximately 25 hours (~$50 USD) of free cloud credit to qualified developers. Apply via the AMD Developer Cloud portal. Data Monsters can help your team make the most of these credits.

Data Monsters is Your Best AMD ROCm Implementation Partner

Data Monsters, a Cupertino-based enterprise AI consulting company, has been working with AMD technologies for years. As part of our multi-platform AI expertise, we help funded startups and enterprise R&D teams design and implement AMD ROCm-based software and hardware solutions. Our team combines deep AMD hardware knowledge with hands-on ROCm software deployment experience.

- CUDA-to-HIP porting & migration

- LLM inference optimization on AMD Instinct

- Fine-tuning pipelines (DeepSpeed, FSDP)

- Performance profiling with rocProfiler

- ROCm environment setup & configuration

- MLOps pipeline on Kubernetes with ROCm

- AMD Developer Cloud architecture

- Hybrid AMD + NVIDIA infrastructure