AMD Instinct™

GPU Accelerators

Need help with AMD Instinct GPU-based development?

Data Monsters is your best choice. We help funded startups and enterprise R&D teams design and implement AMD Instinct-based AI software and hardware solutions.

With 17+ years in AI, hundreds of completed projects, and deep AMD hardware expertise, we are ready to accelerate your AI product.

Work with us

17+ years

80+ experts

150+ projects

What are AMD Instinct™ GPUs?

AMD Instinct™ GPUs are the company's datacenter-class AI accelerators, engineered from the ground up for massive-scale parallel compute workloads. Unlike consumer or workstation GPUs, Instinct accelerators use AMD's CDNA (Compute DNA) architecture — optimized entirely for AI compute and HPC, with no gaming graphics overhead.

The MI300X, introduced in late 2023, pairs the CDNA 3 architecture with 192 GB of HBM3 and 5.3 TB/s of memory bandwidth. It became the fastest product ramp in AMD history, adopted by Microsoft Azure, Meta, Oracle Cloud, IBM Cloud, and Dell Technologies within its first year. That memory capacity lets organizations run large models such as Llama 3 70B or GPT-4-class architectures on a single node.

In 2025, AMD launched the MI350 Series (CDNA 4), a ground-up redesign on TSMC N3P with 185 billion transistors that supports the new FP4 and FP6 data types and delivers up to 35× higher inference performance than the MI300 Series. In 2026, AMD and Meta announced a 6-gigawatt strategic partnership to deploy a custom MI450-based GPU optimized for Meta's inference workloads.

AMD Instinct™ MI350 Series – Leadership AI & HPC Acceleration

Official AMD overview of the MI350 Series GPUs – built on CDNA 4, designed for training massive AI models, high-speed inference, and complex HPC workloads.

AMD Instinct™ GPU Accelerator Lineup

From the revolutionary MI300 Series to the latest CDNA 4 accelerators and the forthcoming MI450 for Meta — the full AMD Instinct roadmap.

AMD Instinct™

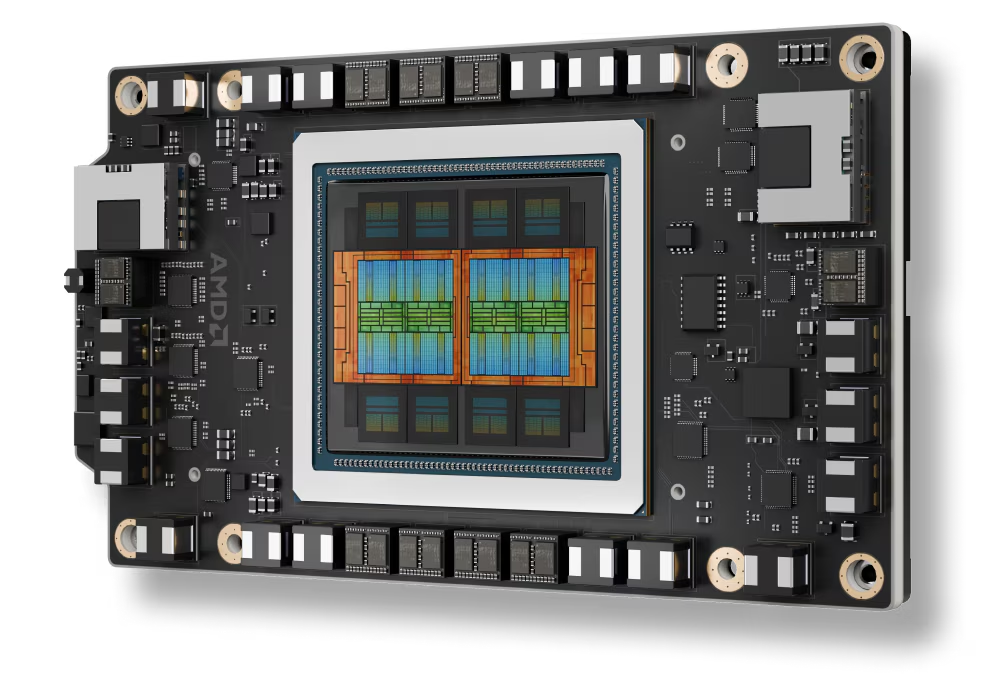

MI300X

CDNA 3 Architecture · 5nm TSMC

Memory

192 GB HBM3

Bandwidth

5.3 TB/s

FP16 / BF16

1,307 TFLOPS

FP8

2,614 TFLOPS

The fastest product ramp in AMD history. Adopted by Microsoft Azure, Meta, Oracle, IBM Cloud, and Dell. Ideal for LLM inference — capable of running 70B+ parameter models on a single GPU. Available via AMD Developer Cloud.

AMD Instinct™

MI325X

CDNA 3 Architecture · Mid-Cycle Upgrade

Memory

256 GB HBM3E

Bandwidth

6.0 TB/s

BF16

1,307 TFLOPS

FP8

2,615 TFLOPS

An upgrade to the MI300X with 256 GB of HBM3E memory (the first AI GPU to reach this threshold) and 6 TB/s of bandwidth via 16-Hi stacks. Supports matrix sparsity acceleration and delivers 30%+ higher performance than NVIDIA H100 on AI workloads.

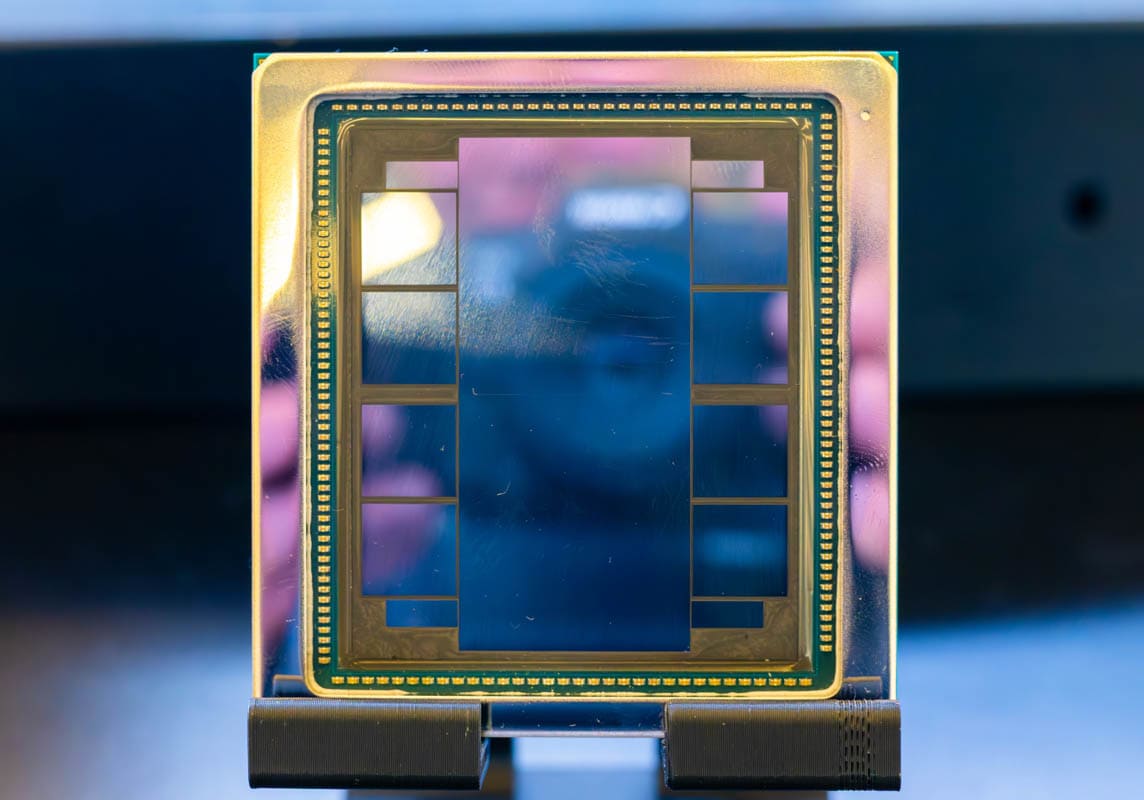

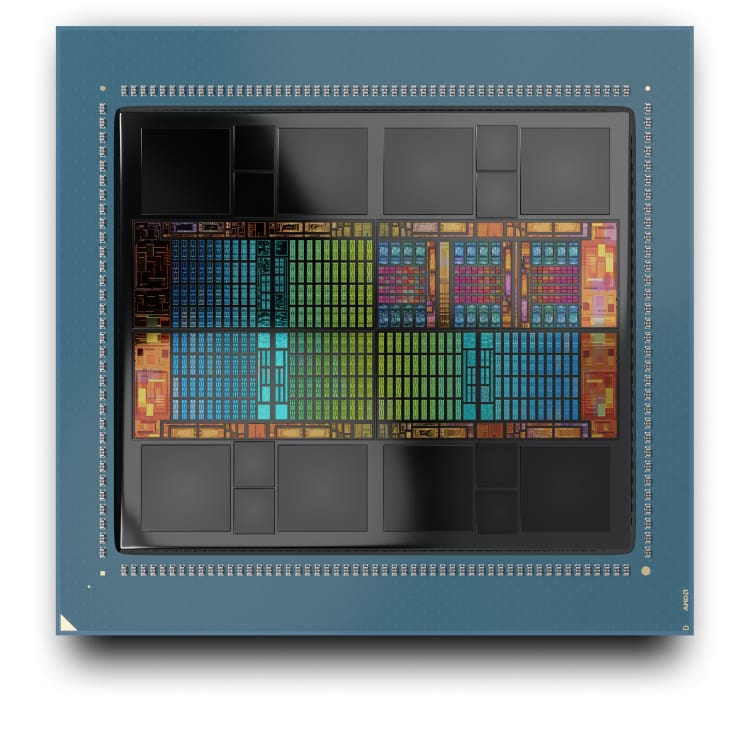

AMD Instinct™

MI350X / MI355X

CDNA 4 Architecture · 3nm TSMC (N3P)

Memory

288 GB HBM3E

Bandwidth

8.0 TB/s

FP4 (MI355X)

20 PFLOPS

Transistors

185B

Ground-up CDNA 4 redesign launched at Advancing AI 2025. Delivers up to 35× the inference performance of the MI300 Series. MI350X is air-cooled (1,000W TDP); MI355X is liquid-cooled (1,400W TDP). Scales to 128 GPUs and 36 TB of HBM3E in a rack configuration.

AMD Instinct™

MI450 (Meta Custom)

CDNA 5 Architecture · 2nm-class TSMC

Process Node

TSMC 2nm-class

Architecture

CDNA 5 / Helios

Deployment

Meta · 6 GW

Shipments

H2 2026

Co-engineered with Meta through the Open Compute Project on the AMD Helios rack-scale architecture. Optimized for Meta's inference workloads — competing directly with NVIDIA Vera Rubin. Part of the 6-gigawatt, multi-year AMD–Meta strategic partnership announced February 2026.

AMD Instinct™ GPU Specifications Comparison

Detailed technical comparison across the AMD Instinct product family – as of March 2026.

Why AMD Instinct™ for Enterprise AI

AMD Instinct GPUs deliver unique architectural advantages that make them the ideal choice for open, scalable enterprise AI deployments.

Massive HBM Memory

AMD Instinct leads the industry in GPU memory capacity. MI355X packs 288 GB HBM3E at 8 TB/s — enabling enterprises to fit the largest LLMs (70B+ parameters) in GPU memory and dramatically reduce inference latency by eliminating CPU-GPU data movement.
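As a rough illustration of the capacity claim, the sketch below estimates the memory needed for model weights alone at different precisions and checks it against the HBM sizes quoted on this page. The function names and the 20% reserve for KV cache and activations are assumptions made for this example, not AMD sizing guidance.

```python
# Bytes per parameter for common weight precisions (FP6 is 6 bits = 0.75 bytes).
BYTES_PER_PARAM = {"fp16": 2.0, "bf16": 2.0, "fp8": 1.0, "fp6": 0.75, "fp4": 0.5}


def weight_footprint_gb(num_params: float, precision: str) -> float:
    """Memory needed for the model weights alone, in decimal gigabytes."""
    return num_params * BYTES_PER_PARAM[precision] / 1e9


def fits_on_gpu(num_params: float, precision: str, hbm_gb: float,
                reserve_fraction: float = 0.2) -> bool:
    """True if the weights fit while keeping reserve_fraction of HBM free.

    The 20% default reserve (for KV cache and activations) is an assumed
    rule of thumb, not a vendor figure.
    """
    usable_gb = hbm_gb * (1.0 - reserve_fraction)
    return weight_footprint_gb(num_params, precision) <= usable_gb


if __name__ == "__main__":
    # HBM capacities as listed on this page.
    for name, hbm_gb in [("MI300X", 192), ("MI325X", 256), ("MI355X", 288)]:
        for precision in ("bf16", "fp8"):
            need = weight_footprint_gb(70e9, precision)
            ok = fits_on_gpu(70e9, precision, hbm_gb)
            print(f"{name} {precision}: weights need {need:.0f} GB, fits={ok}")
```

A 70B-parameter model in BF16 needs about 140 GB for weights, which is why it fits on a single 192 GB accelerator under these assumptions; real deployments also need headroom that depends on batch size and context length.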

AMD Infinity Fabric™ Interconnect

4th Gen AMD Infinity Fabric links up to 8 MI325X OAMs in a fully connected topology delivering 1,075 GB/s GPU-to-GPU bandwidth. At scale, the MI355X Orv3 rack combines 128 GPUs with 36 TB of aggregate HBM3E, a configuration suited to trillion-parameter model training.
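The rack-level figures follow from multiplying the per-GPU specifications quoted on this page. The short sketch below reproduces that arithmetic; the function name is illustrative, and the use of binary terabytes (1 TB = 1,024 GB) is an assumption chosen because it matches the 36 TB figure.

```python
def rack_totals(num_gpus: int, hbm_gb_per_gpu: int, fp4_pflops_per_gpu: float):
    """Aggregate HBM capacity (TB) and peak FP4 compute (exaflops) for a rack."""
    total_hbm_tb = num_gpus * hbm_gb_per_gpu / 1024        # assumes 1 TB = 1,024 GB
    total_fp4_eflops = num_gpus * fp4_pflops_per_gpu / 1000
    return total_hbm_tb, total_fp4_eflops


if __name__ == "__main__":
    # MI355X rack: 128 GPUs, 288 GB HBM3E and 20 PFLOPS FP4 each.
    hbm_tb, eflops = rack_totals(128, 288, 20)
    print(f"MI355X rack: {hbm_tb:.0f} TB HBM3E, {eflops:.2f} EFLOPS FP4")

    # 8-GPU MI325X platform: 256 GB HBM3E each (FP4 is not listed for MI325X).
    hbm_tb, _ = rack_totals(8, 256, 0)
    print(f"MI325X 8-GPU platform: {hbm_tb:.0f} TB HBM3E")
```

Under these assumptions the MI355X rack works out to 36 TB and 2.56 exaflops, which this page rounds to 2.6 exaflops. These are peak theoretical aggregates, not measured throughput.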

Open Ecosystem, No Vendor Lock-In

AMD Instinct GPUs pair with ROCm — a fully open-source software stack. Unlike NVIDIA's proprietary CUDA, your team retains full control over the software, the deployment pipeline, and the hardware choice. ROCm Enterprise AI is free of charge.
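One practical consequence for PyTorch users is that ROCm builds of PyTorch reuse the `torch.cuda` namespace, so device-selection code written for NVIDIA GPUs often runs unchanged. The sketch below reports which backend a PyTorch build targets by reading `torch.version.hip` and `torch.version.cuda`. The helper name `describe_backend` and the stub build are hypothetical and exist only for illustration.

```python
from types import SimpleNamespace


def describe_backend(torch_module) -> str:
    """Report which GPU backend a PyTorch build targets.

    ROCm builds set torch.version.hip to a version string; CUDA builds
    set torch.version.cuda instead.
    """
    hip_version = getattr(torch_module.version, "hip", None)
    cuda_version = getattr(torch_module.version, "cuda", None)
    if hip_version:
        return f"ROCm/HIP {hip_version}"
    if cuda_version:
        return f"CUDA {cuda_version}"
    return "CPU-only"


if __name__ == "__main__":
    try:
        import torch
    except ImportError:
        # Hypothetical stand-in so the sketch runs without PyTorch installed.
        stub = SimpleNamespace(version=SimpleNamespace(hip="6.0", cuda=None))
        print("PyTorch not installed; stub reports:", describe_backend(stub))
    else:
        print(describe_backend(torch))
        # On ROCm builds this call reports AMD GPUs despite the "cuda" name.
        print("GPU visible:", torch.cuda.is_available())
```

Custom CUDA kernels and vendor-specific libraries still need porting work, so this check is a starting point for a migration assessment rather than proof of compatibility.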

FP4 / FP6 Precision Support

CDNA 4 introduces FP4 and FP6 data types — ultra-low precision formats that dramatically increase inference throughput while maintaining model quality. MI355X achieves 20 PFLOPS FP4, enabling cost-effective deployment of the most demanding generative AI models.
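To make the idea of a 4-bit floating-point format concrete, the sketch below rounds a block of values to the E2M1 grid (1 sign bit, 2 exponent bits, 1 mantissa bit), the FP4 element format defined in the OCP Microscaling specification. It is a simplified illustration: it assumes one shared floating-point scale per block, whereas microscaling formats use a power-of-two scale, and it says nothing about how CDNA 4 hardware implements the conversion.

```python
import math

# Non-negative magnitudes representable in E2M1 (sign is stored separately).
E2M1_MAGNITUDES = (0.0, 0.5, 1.0, 1.5, 2.0, 3.0, 4.0, 6.0)


def quantize_block_fp4(values):
    """Round a block of floats to the E2M1 grid using one shared scale.

    Returns (scale, dequantized_values). The scale maps the largest
    magnitude in the block onto 6.0, the largest E2M1 value.
    """
    max_abs = max((abs(v) for v in values), default=0.0)
    if max_abs == 0.0:
        return 1.0, [0.0 for _ in values]
    scale = max_abs / E2M1_MAGNITUDES[-1]
    dequantized = []
    for v in values:
        target = abs(v) / scale
        # Pick the closest representable magnitude (ties go to the smaller one).
        nearest = min(E2M1_MAGNITUDES, key=lambda m: abs(m - target))
        dequantized.append(math.copysign(nearest * scale, v))
    return scale, dequantized


if __name__ == "__main__":
    scale, approx = quantize_block_fp4([6.0, -3.0, 1.4, 0.2])
    print(scale, approx)
```

With only eight magnitudes available, small values collapse to zero and mid-range values snap to coarse steps, which is why FP4 deployments rely on per-block scaling and careful calibration to preserve model quality.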

OCP / OAM Standard Design

AMD Instinct accelerators use Open Compute Project (OCP) OAM module standards — making them compatible with standard rack infrastructure and allowing mix-and-match server deployments. The AMD Helios rack-scale architecture extends this to the rack level.

Leadership Price-Performance

Multiple independent benchmarks show MI300X delivering 30%+ higher performance than NVIDIA H100 on AI workloads. For enterprises operating at scale, AMD Instinct's open ecosystem and competitive pricing translate directly into lower total cost of ownership.

AMD Instinct™ Annual Innovation Cadence

AMD committed to an annual GPU release cadence for Instinct – delivering generation-over-generation leadership performance every year.

AMD Instinct MI300X + MI300A

CDNA 3 · 5nm TSMC

Launched in December 2023. The MI300X became the fastest product ramp in AMD's history, adopted immediately by Microsoft Azure, Meta, Oracle Cloud, Dell, HPE, and Lenovo. With the MI300A, AMD introduced the world's first unified HPC APU.

AMD Instinct MI325X

CDNA 3 · Mid-Cycle Upgrade

First AI GPU with 256 GB HBM3E memory. Launched Q4 2024 with 6 TB/s bandwidth and matrix sparsity support. Server solutions from leading OEMs began availability Q1 2025. Supports up to 2 TB HBM3E in 8-GPU OAM platforms.

AMD Instinct MI350X / MI355X

CDNA 4 · 3nm TSMC (N3P) · 185B transistors

Launched at Advancing AI 2025. Ground-up CDNA 4 redesign with 35× inference improvement, 288 GB HBM3E, 8 TB/s, and FP4/FP6 support. The MI355X rack (128 GPUs, 36 TB HBM3E) delivers 2.6 exaflops FP4 — competing directly with NVIDIA's Blackwell B200/GB200.

AMD Instinct MI450 (Meta Custom) + MI400 Series

CDNA 5 / Helios · 2nm-class TSMC

The MI450 is co-engineered by AMD and Meta on the AMD Helios rack-scale architecture (Open Compute Project). Powered by CDNA 5 on a 2nm-class TSMC node, it targets inference workloads and the 6 GW Meta deployment. The MI400 Series, with 432 GB HBM4 and 40 PFLOPS FP4, is expected in 2026.

AMD Instinct GPU Use Cases

Data Monsters delivers production-ready AMD Instinct solutions across the full spectrum of enterprise AI and HPC workloads.

LLM Training at Scale

Train Llama 4, DeepSeek, Mistral, and custom foundation models on AMD Instinct clusters using ROCm and PyTorch FSDP/DDP. With 288 GB of HBM3E per GPU, 70B+ parameter models can be sharded across fewer devices, which reduces model-parallel communication overhead.
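A back-of-the-envelope estimate shows how HBM capacity affects the number of GPUs needed to hold training state. The sketch assumes mixed-precision Adam at roughly 16 bytes per parameter (2 for weights, 2 for gradients, 12 for FP32 master weights and optimizer moments) with state fully sharded across GPUs. The function names and the 70% usable-memory fraction are assumptions for illustration, and activation memory is ignored.

```python
import math


def training_state_gb(num_params: float, bytes_per_param: float = 16.0) -> float:
    """Weights + gradients + optimizer state, in decimal gigabytes.

    16 bytes/param is the common mixed-precision Adam estimate.
    """
    return num_params * bytes_per_param / 1e9


def min_gpus_for_sharded_state(num_params: float, hbm_gb_per_gpu: float,
                               usable_fraction: float = 0.7) -> int:
    """Smallest GPU count whose combined usable HBM holds the training state.

    usable_fraction (assumed 0.7) leaves room for activations and buffers.
    """
    per_gpu_budget_gb = hbm_gb_per_gpu * usable_fraction
    return math.ceil(training_state_gb(num_params) / per_gpu_budget_gb)


if __name__ == "__main__":
    print(f"70B training state: {training_state_gb(70e9):.0f} GB")
    for name, hbm_gb in [("MI300X", 192), ("MI355X", 288)]:
        print(name, min_gpus_for_sharded_state(70e9, hbm_gb), "GPUs minimum")
```

Under these assumptions a 70B model carries about 1,120 GB of training state, so it still has to be sharded, but larger HBM lowers the minimum GPU count and keeps more of the job inside a single node.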

High-Throughput LLM Inference

Serve production LLM APIs with vLLM, Triton, or TGI on AMD Instinct, using FP4/FP8 quantization to maximize tokens per second. ROCm 7 distributed inference separates the prefill and decode phases, which helps control serving costs for reasoning models.
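A simple memory-bandwidth model illustrates why lower-precision weights raise decode throughput. The sketch assumes single-sequence decoding is bandwidth-bound, meaning every generated token requires reading all model weights from HBM once. It ignores KV cache traffic, batching, and compute limits, and the function name is illustrative.

```python
def decode_tokens_per_second_bound(num_params: float, bytes_per_param: float,
                                   bandwidth_tb_s: float) -> float:
    """Upper bound on single-sequence decode speed under a bandwidth-bound model.

    Each token is assumed to require one full read of the weights from HBM.
    """
    weight_bytes = num_params * bytes_per_param
    return bandwidth_tb_s * 1e12 / weight_bytes


if __name__ == "__main__":
    # 70B parameters on a GPU with 8 TB/s of HBM bandwidth (the MI355X figure).
    for label, nbytes in [("bf16", 2.0), ("fp8", 1.0), ("fp4", 0.5)]:
        bound = decode_tokens_per_second_bound(70e9, nbytes, 8.0)
        print(f"{label}: at most ~{bound:.0f} tokens/s per sequence")
```

Halving the bytes per parameter doubles this bound, which is the intuition behind FP8 and FP4 serving. Measured throughput depends on batch size, kernel efficiency, and the serving framework.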

Scientific HPC & Simulation

Run molecular dynamics, climate modeling, finite element analysis, and quantum chemistry simulations on AMD Instinct clusters. Frontier, the world's first exascale supercomputer, runs on AMD Instinct MI250X GPUs.

Computer Vision & Synthetic Data

Train and deploy real-time object detection, video analytics, diffusion-based image generation, and synthetic data pipelines. Data Monsters has built multiple computer vision products on AMD hardware.

Speech AI & Multimodal Models

Build real-time ASR, TTS, and multimodal AI (vision-language models) on AMD Instinct. ROCm's full Hugging Face integration gives access to 1.8M+ models including Whisper, CLIP, and LLaVA variants.

Manufacturing & Industrial AI

Deploy predictive maintenance, quality inspection, and digital twin AI applications on AMD Instinct infrastructure. Data Monsters has built computer vision analytics for manufacturing with AMD hardware partners like OnLogic.

AMD & Meta: A 6-Gigawatt AI Partnership

In February 2026, AMD and Meta announced a landmark multi-year, multi-generation strategic partnership to deploy up to 6 gigawatts of AMD Instinct GPUs. The first deployment will use a custom AMD Instinct GPU based on the MI450 architecture — co-designed with Meta on the AMD Helios rack-scale architecture developed through the Open Compute Project.

The systems will pair the custom MI450-based GPU with 6th Gen AMD EPYC™ CPUs (codenamed "Venice"), running ROCm™ software. Shipments for the first gigawatt deployment are expected to begin H2 2026. This partnership signals AMD Instinct's position as the leading open alternative to NVIDIA for hyperscale AI infrastructure.

Build on AMD Instinct

Data Monsters is Your Best AMD Instinct Implementation Partner

Data Monsters, a Cupertino-based enterprise AI consulting company, brings deep expertise in AMD Instinct GPU deployment for AI and HPC. We help funded startups and enterprise R&D teams build and deploy GPU-accelerated AI products — from initial architecture through production deployment. As part of our multi-vendor AI platform expertise, Data Monsters provides AMD Instinct-based solutions alongside our NVIDIA offerings, giving clients hardware-optimized AI with genuine freedom of choice.

- AMD Instinct cluster architecture & sizing

- LLM inference serving (vLLM, Triton, TGI)

- CUDA-to-ROCm workload migration

- AMD Developer Cloud setup & optimization

- ROCm environment setup on MI300X / MI350

- Model fine-tuning pipelines on AMD GPUs

- Hybrid AMD + NVIDIA infrastructure

- Performance benchmarking & TCO analysis