AMD and Data Monsters: Building the AI Developer Community in 2025

Some of the most exciting progress in AI happens not just inside large research labs, but out in the open – when developers around the world get their hands on real hardware and real problems. That belief is one AMD and Data Monsters share, and it became the foundation of our work together throughout 2025.

AMD runs an active global developer program, and in 2025 we were proud to be brought on board to help organize and run three of their challenges — each with a unique focus, from writing bare-metal GPU inference kernels to building physical robot arms in 48-hour hackathons. Working alongside AMD and a broader coalition of partners, our role was to help bring AMD's vision to life: designing the contest mechanics, coordinating infrastructure, managing communications, and ensuring that developers had everything they needed to compete at their best.

This is the story of what we helped build.

Why AMD Invests in the Developer Community

AMD's rise in AI infrastructure – with its Instinct™ GPU lineup and open ROCm™ software stack – is well documented. But AMD's ambitions extend well beyond selling chips. The company has made a clear strategic bet on developer enablement: by staging rigorous competitions, AMD accelerates real-world optimization of its stack, surfaces elite engineering talent, and builds a community that is deeply and practically familiar with AMD technology.

These contests turn abstract hardware architecture into measurable, reproducible improvement. Every submitted kernel and every robot demo contributes to AMD's understanding of how its products perform under real pressure – and generates open-source knowledge that benefits the entire ecosystem. For Data Monsters, a firm whose mission is built around making AI work in the real world, this was exactly the kind of initiative we wanted to be part of.

Contest 1: Inference Sprint

AMD Developer Challenge 2025 – April–June 2025

Partners: AMD × Data Monsters × GPU Mode

The year's first challenge opened in April 2025 with a precise mandate: write the fastest possible AI inference kernels for AMD Instinct™ GPUs. This was a contest for the genuinely ultra-technical – teams of up to three developers who live in the world of GPU code and performance optimization. Three kernel categories were on the table:

- MLA with RoPE – the attention mechanism powering modern reasoning architectures, including Rotary Positional Encoding (up to 1,500 points)

- Fused MoE – token-routing operations for Mixture-of-Experts models like Mixtral and DeepSeek (up to 1,250 points)

- FP8 GEMM – matrix multiplication in 8-bit floating point for maximum throughput (up to 1,000 points

The contest ran on the GPU Mode Discord server, using the KernelBot automation platform — which executed each submission on AMD cloud GPUs, measured performance against the baseline, and updated a live public leaderboard in real time. GPU Mode, co-founded by Mark Saroufim of Meta, is one of the world's most active ML systems communities, and KernelBot provided a uniquely transparent and rigorous evaluation environment for participants.

Scoring was unforgiving by design: any submission that failed to beat the PyTorch baseline received zero points. Registration opened April 9, the submission window ran April 15 – June 8, and a total of $150,000 in prizes was on offer, anchored by a $100,000 grand prize. The top teams were invited to AMD's Advancing AI Day in San Jose on June 12, where AMD CEO Dr. Lisa Su personally honored the winners on stage. The grand prize went to the team of Zesen Lu, Yankui Wang, and Yingyi Hao — researchers who pushed AMD hardware closer to its theoretical performance roofline than anyone had before.

Data Monsters supported the challenge through promotion, participant communications, and technical coordination throughout the submission window.

Contest 2: Distributed Inference Kernels

AMD Developer Challenge 2025 – August–October 2025

Partners: AMD × Data Monsters × GPU Mode

If the spring sprint was about pushing a single GPU to its limits, the fall edition raised the complexity by an order of magnitude: multi-GPU communication kernels for distributed large language model inference. As LLMs scale beyond what any single chip can handle, the efficiency of inter-GPU communication becomes just as critical as raw compute – and this was the frontier AMD put in front of the community.

Participants tackled three foundational distributed operations on a single node with 8× AMD Instinct™ MI300X GPUs:

- All-to-All — token routing for distributed Mixture-of-Experts models

- GEMM + ReduceScatter — fused matrix multiply with tensor-parallel reduce-scatter

- AllGather + GEMM — fused all-gather and matrix multiply for parallel inference

Registration ran August 23 – September 20, with submissions accepted through October 13. The response was extraordinary: over 600 developers participated, generating more than 60,000 code submissions and peaking at 5,000 test runs per day – a scale that speaks to how energized the AMD developer community has become.

The winning teams were announced at AMD AI DevDay 2025 in San Francisco on October 20, held during Open-Source AI Week:

- 🏆 Grand Prize ($100,000): RadeonFlow

- 🥇 1st Place ($25,000): Cabbage Dog

- 🥈 2nd Place ($15,000): Epliz

- 🥉 3rd Place ($10,000): Team Ganesha

The contest generated not just winners, but a body of genuinely novel open-source work. Teams published detailed technical write-ups on how they overlapped communication and computation on MI300X – knowledge that anyone working with AMD GPUs can build on directly. Data Monsters coordinated AMD Developer Cloud resources, managed the participant Q&A pipeline, and ensured the infrastructure ran smoothly throughout.

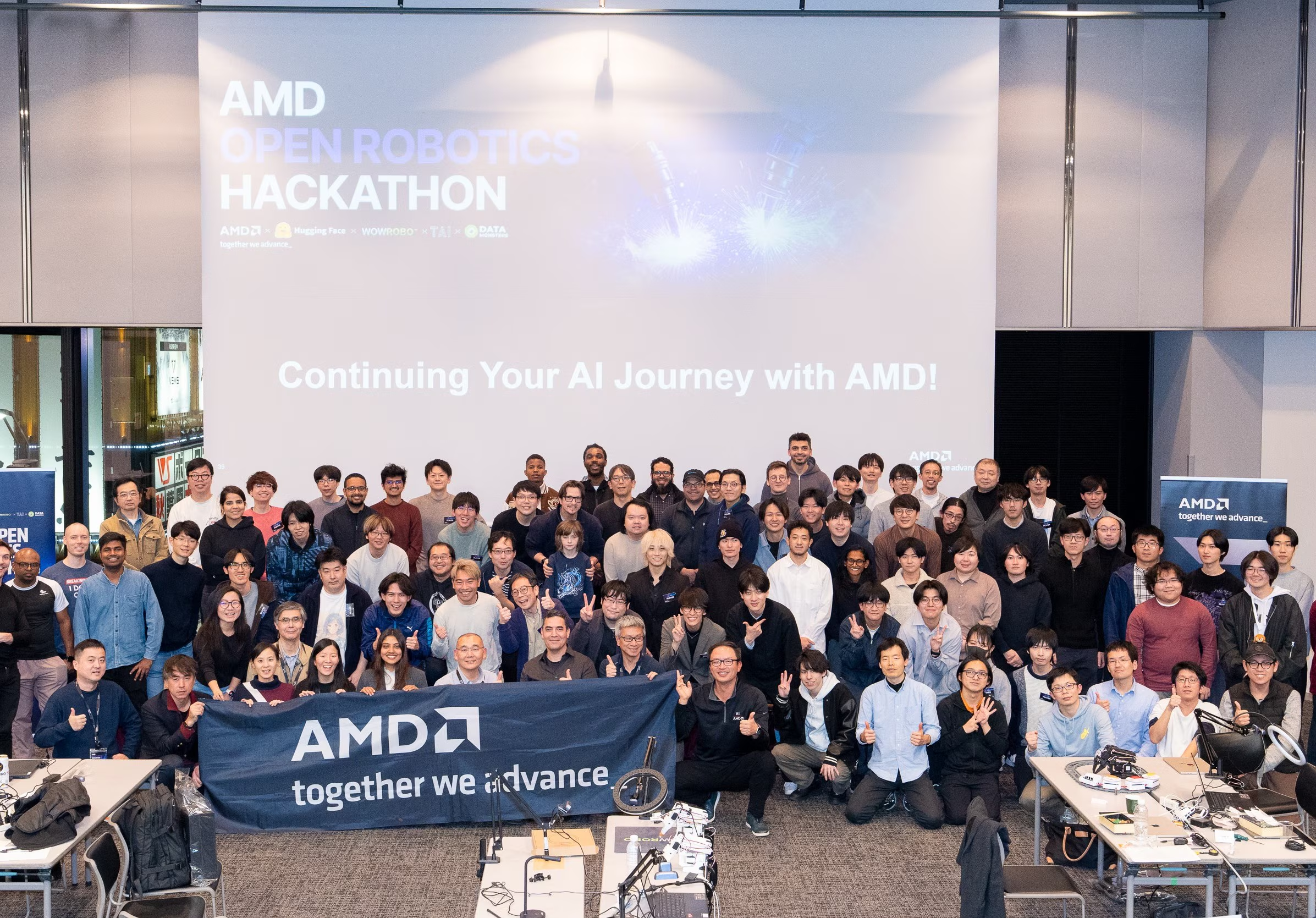

Contest 3: Open Robotics Hackathon

AMD × Hugging Face × Data Monsters × WOWROBO × TAI

Tokyo: December 5–7, 2025 | Paris: December 12–14, 2025

The year's final challenge was a complete change of pace – and the most viscerally exciting of the three. The AMD & Hugging Face Open Robotics Hackathon brought the competition off the screen and into the physical world, running consecutive events in Tokyo and Paris in December. The central technology was Hugging Face LeRobot – the open-source library for training and deploying robot control policies using modern ML – running on AMD hardware from edge to cloud.

Two Missions

Teams of 2–4 worked through two consecutive challenges:

- Mission 1 – Pick and Place (Day 1)

An instructor-led onboarding workshop where teams set up the full LeRobot development environment end-to-end on AMD's stack. The task was deliberately straightforward: pick up a small object – a LEGO brick, a pen – and place it in a bin. Simple enough to complete in a day, rigorous enough to confirm the entire pipeline was operational. - Mission 2 – Freestyle Build (Days 2–3)

Teams defined and built their own real-world robotic application, with overnight access to the venue for those who needed it. Only Mission 2 was judged for prizes, scored on a 100-point scale

All winning teams were required to publish their full code, training datasets, trained models, and demo videos publicly on GitHub — ensuring every breakthrough could benefit the wider community.

The Hardware Stack

AMD equipped every participant with:

- SO-101 robot arm kits for hands-on prototyping

- AMD Ryzen™ AI laptops for on-device edge inference

- AMD Developer Cloud access with AMD Instinct™ MI300X GPUs for model training

- Up to $300 per team in hardware reimbursement for equipment purchased after the contest announcement

Prizes (Per City):

- 🥇 First place: $10,000

- 🥈 Two second-place teams: $5,000 each

- 🥉 Five third-place teams: $2,000 each

A Year of Building with AMD

Three contests. Three formats. Hundreds of developers across two continents. Looking back at 2025, this body of work with AMD stands as a genuine highlight for the Data Monsters team – not just as a business milestone, but as a reminder of what becomes possible when a hardware leader opens its ecosystem to the world and invests seriously in the people building on top of it.

Whether it was a researcher squeezing the last nanosecond out of an attention kernel, a distributed systems engineer rethinking how GPU clusters communicate, or a robotics team training a robot arm through the night in a Paris venue – every participant moved the AMD ecosystem forward in a real, measurable way. We're proud to have played a role in making that happen, and we're already looking forward to what comes next!